In a sweeping push to rein in misinformation and recalibrate the tone of broadcast journalism, the Centre has intensified its digital fact-checking operations while tightening oversight of television news content. Together, the two parallel interventions signal a sharper regulatory posture in India’s information ecosystem.

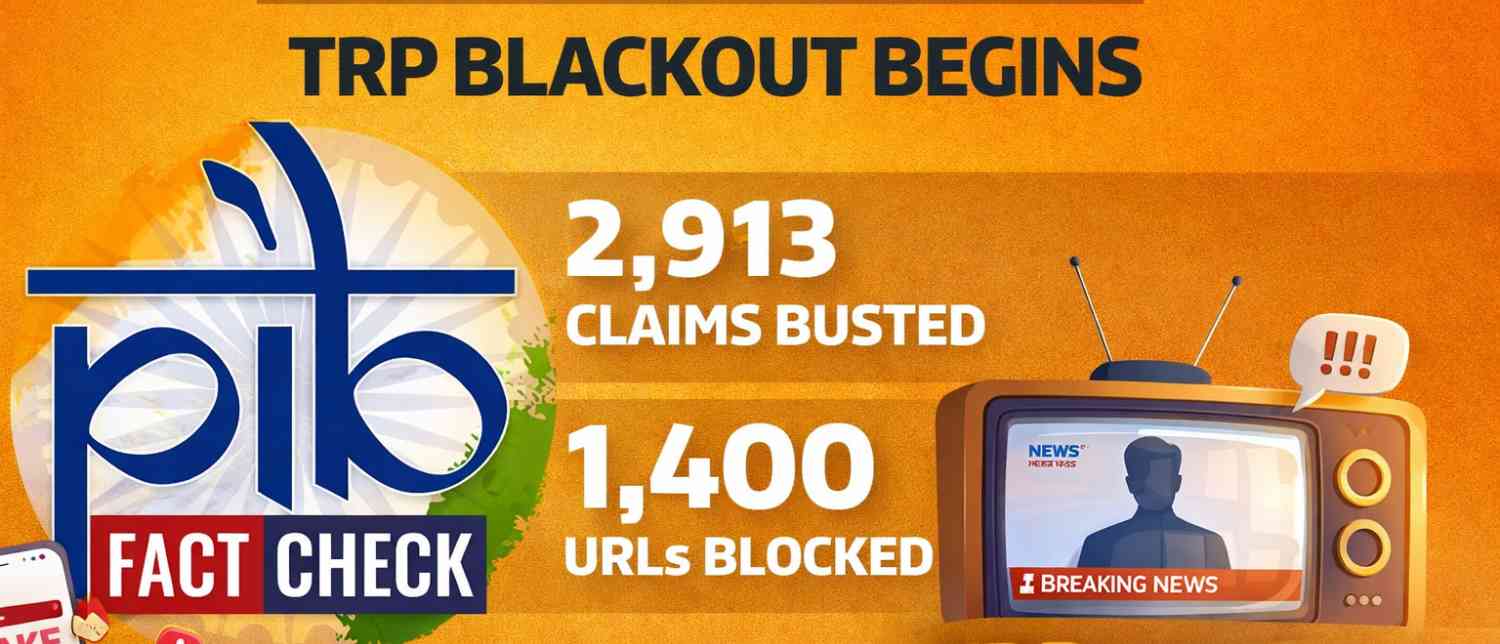

At the heart of this effort is the government’s Fact Check Unit (FCU) under the Press Information Bureau (PIB), which has emerged as a central instrument in countering what officials describe as a surge in fake narratives, including deepfakes and AI-generated misinformation. According to official data placed in Parliament, the unit has so far issued 2,913 fact-checks, debunking a wide spectrum of false claims linked to the Union government.

These fact-checks span misleading videos, fabricated notifications, doctored letters, spoofed websites and manipulated social media content. The FCU verifies such claims through authorised government sources before publishing corrections across its digital platforms, including X, Facebook, Instagram, Telegram, Threads and WhatsApp channels.

A digital battlefield: misinformation, deepfakes and “Operation Sindoor”

The scale of intervention grew during what the government referred to as “Operation Sindoor,” a period marked by the rapid circulation of hostile and misleading narratives online. During this phase, the PIB’s Fact Check Unit not only issued clarifications but also triggered the blocking of over 1,400 URLs across digital platforms.

Officials told Parliament that the operation involved identifying “anti-India narratives” and acting swiftly to ensure “accurate public communication,” highlighting the growing concern within the government over information warfare in the digital age.

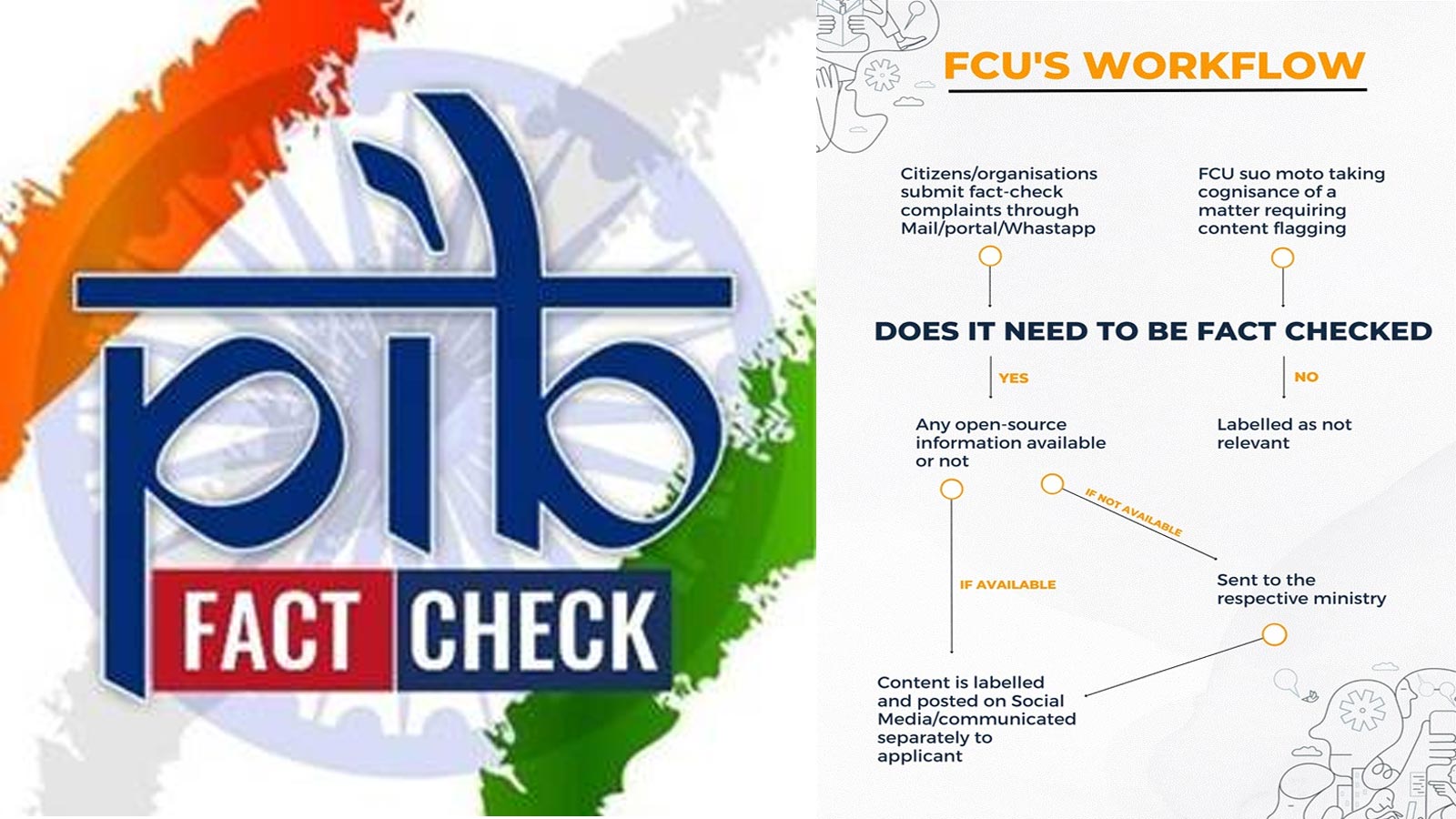

The FCU’s mandate extends beyond reactive debunking. Established in November 2019, the unit was designed as a proactive institutional mechanism to tackle misinformation related to government policies, schemes and announcements. Its workflow involves citizen participation—users can submit suspicious content via WhatsApp, email or a web portal—after which queries are vetted through multiple layers of verification involving open-source intelligence and official confirmations.

Content examined by the unit is ultimately classified into three categories: fake, misleading or true, depending on the outcome of verification.
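The workflow described above (citizen submission, verification against official sources, and a three-way verdict) can be sketched in a few lines of Python. This is an illustrative sketch only, not the FCU's actual system: the names `Submission`, `intake` and `classify`, the channel identifiers, and the decision rule itself are assumptions made for the example. Only the three submission channels and the three verdict categories come from the description above.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    """The three outcome categories named in the article."""
    FAKE = "fake"
    MISLEADING = "misleading"
    TRUE = "true"


# Submission channels mentioned in the article; identifiers are hypothetical.
CHANNELS = frozenset({"whatsapp", "email", "web_portal"})


@dataclass(frozen=True)
class Submission:
    channel: str
    claim: str


def intake(channel: str, claim: str) -> Submission:
    """Accept a citizen submission from one of the supported channels."""
    if channel not in CHANNELS:
        raise ValueError(f"unsupported channel: {channel}")
    return Submission(channel, claim.strip())


def classify(matches_official_record: bool, context_distorted: bool) -> Verdict:
    """Assumed decision rule, not a documented FCU procedure.

    A claim contradicted by official sources is fake; a claim with a
    factual basis but distorted framing is misleading; otherwise true.
    """
    if not matches_official_record:
        return Verdict.FAKE
    if context_distorted:
        return Verdict.MISLEADING
    return Verdict.TRUE
```

In this sketch the two boolean inputs stand in for the outcome of the multi-layer verification step, which in practice involves human review rather than a simple flag.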

The broader concern, as articulated in official documentation, is that unchecked misinformation can erode trust in democratic institutions, inflame social and political tensions, and even endanger lives.

Legal backing and compliance architecture

The FCU operates within the framework of the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, which lay down a code of ethics for digital publishers and provide a three-tier grievance redressal mechanism for violations.

Officials emphasised that this institutional backing is crucial in ensuring that digital media platforms adhere to standards of accuracy and accountability, particularly as misinformation becomes more sophisticated with the use of AI tools.

The government has also increasingly encouraged public participation in flagging dubious content, positioning the fight against misinformation as a collaborative effort between citizens and institutions.

From digital misinformation to TV sensationalism

Even as the FCU expands its digital footprint, the government has turned its attention to another arena: television news.

In a rare and consequential move, the Ministry of Information and Broadcasting directed the temporary suspension of Television Rating Points (TRPs) for news channels for four weeks, citing concerns over sensational and speculative reporting.

The decision, disclosed in Parliament, follows coverage of Operation Sindoor and global conflict situations, during which certain channels were found airing what the government described as “unwarranted sensational and speculative content.”

Authorities warned that such reporting could trigger panic among viewers, especially those with family or personal connections in conflict zones.

TRPs—used as a key metric for viewership and advertising revenue—have long driven competition among broadcasters. By withholding these ratings, the government aims to remove the incentive for sensationalism driven by the race for higher viewership.

Industry response and implications

According to official statements, the directive to suspend TRPs has been widely accepted by stakeholders, with no objections raised so far.

The move, however, carries significant implications for the broadcast industry. Without TRP data, advertisers lose a primary benchmark for allocating spending, potentially affecting revenue streams for news channels. At the same time, the government has framed the step as a temporary “precautionary measure” intended to promote responsible journalism during sensitive periods.

The directive draws authority from the Policy Guidelines for Television Rating Agencies (2014), under which agencies like the Broadcast Audience Research Council (BARC) are required to comply with government directions.

Officials indicated that the suspension could be extended if necessary, depending on how news coverage evolves during the monitoring period.

A coordinated push for information discipline

Taken together, the expansion of the PIB’s fact-checking operations and the TRP suspension reflect a coordinated strategy: addressing misinformation at its source in the digital ecosystem while curbing its amplification through broadcast media.

The government’s approach underscores a growing recognition that misinformation today is not confined to a single platform. It travels across formats—originating on social media, gaining traction through digital networks, and often being amplified on television.

By issuing 2,913 fact-checks, blocking over 1,400 URLs, and temporarily halting TRP-driven competition, authorities appear to be attempting a structural intervention: one that targets both the online origin of false claims and the ratings incentive that rewards amplifying them on air.

The road ahead

Yet the measures also raise broader questions about the balance between regulation and editorial independence, particularly in a media landscape where speed often competes with accuracy.

For now, the government maintains that the interventions are limited, targeted and driven by public interest, aimed at preventing panic, preserving trust and ensuring that information reaching citizens is verified and responsible.

As misinformation becomes more technologically sophisticated and media ecosystems more interconnected, India’s dual-track response—combining real-time fact-checking with broadcast regulation—may well serve as a template for how governments navigate the complex terrain of the information age.

With inputs from agencies

Image Source: Multiple agencies
